Or Why Clarity in AI Prompts Matters…

Artificial intelligence has entered everyday use in healthcare and beyond. Doctors already explore AI for support in diagnostics, speech recognition, and patient communication. But outcomes depend heavily on how we phrase requests. Clear prompts give sharper results, while vague or overloaded ones create noise. Studies on clinical decision support highlight the same rule — structured inputs improve machine accuracy (NIH).

AI does not think or infer like humans. It follows patterns in training data. When we say “describe a hospital building,” the result is generic. When we specify “a modern hospital wing with MRI machines,” the output aligns with clinical needs.

The Role of Language Bias

Most large language models are trained predominantly on English text, which is why prompts in English tend to deliver more precise answers. Users who work in other languages often see distorted results. Translators such as DeepL or Google Translate help bridge the gap. For doctors in non-English-speaking countries, this is not just a convenience but a necessity for accurate AI output.

For health tech companies, this also means an obligation to test tools in diverse languages. Without this step, adoption will remain uneven. Global medical research stresses that training bias affects outcomes, especially when models are used in sensitive fields such as radiology (The Lancet Digital Health).

Keeping Prompts Simple and Precise

Complicated instructions confuse AI. A long question with several conditions often produces incoherent text or images. Instead, splitting requests into steps works better.

For instance:

- Write a short patient summary.

- Add treatment recommendations.

- Use formal medical language.
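
The three steps above can be run as a short chain in which each prompt works from the previous answer. The following is a minimal sketch in Python, not a reference implementation: `ask_model` is a hypothetical placeholder for whatever client call a given platform provides.

```python
from typing import Callable, List

# The three single-purpose steps from the list above.
STEPS: List[str] = [
    "Write a short patient summary.",
    "Add treatment recommendations.",
    "Use formal medical language.",
]


def run_in_steps(case_notes: str, ask_model: Callable[[str], str]) -> str:
    """Send one instruction at a time, feeding each answer into the next prompt.

    `ask_model` is a hypothetical stand-in for a real model call.
    """
    draft = case_notes
    for step in STEPS:
        # Each prompt holds exactly one instruction plus the text so far.
        prompt = f"{step}\n\nText to work from:\n{draft}"
        draft = ask_model(prompt)
    return draft
```

Keeping one instruction per call makes it easier to see which step produced a weak result and to rerun only that step.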

Best Practices in Medical AI Prompting

Clarity, specificity, and simplicity are the three pillars of effective AI communication. Each plays a distinct role. Clarity ensures that the request is easy for the model to parse. Specificity narrows down possible interpretations, leading to more relevant answers. Simplicity keeps the structure straightforward, reducing the chance of confusion.

Negative phrasing such as “not happy” or “not inflamed” often creates errors. Models can misinterpret negations or invert the meaning. Using direct terms that carry the same meaning, such as “sad” or “normal, healthy tissue,” provides a stable anchor. In medical practice, avoiding double negatives is already standard, and the same applies to AI. Clear, affirmative wording strengthens both safety and efficiency.

Adding measurable details is equally important. Numbers give AI concrete guidance that abstract terms lack. For instance, “fever above 38 °C for 5 days” is more useful than “long fever.” Similarly, “patient takes 5 mg of drug X daily” is far stronger than “low dose of drug X.” These details align AI outputs with clinical expectations, making them more actionable.

Context also matters. AI often works better when provided with structured background. For example, instead of asking “suggest treatment,” a better prompt would be “suggest treatment options for a 45-year-old female with asthma, no prior hospitalizations, non-smoker.” This prevents generic responses and directs the AI toward relevant clinical guidelines.
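
One way to make structured background a habit is to assemble the prompt from named fields rather than free text. The sketch below is illustrative only; the `PatientContext` class and `build_prompt` function are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PatientContext:
    """Hypothetical container for the background a prompt should carry."""
    age: int
    sex: str
    condition: str
    details: List[str] = field(default_factory=list)


def build_prompt(task: str, ctx: PatientContext) -> str:
    """Combine the task with the patient background in one sentence."""
    parts = [f"{ctx.age}-year-old {ctx.sex} with {ctx.condition}"] + ctx.details
    return f"{task} for a " + ", ".join(parts) + "."


example_prompt = build_prompt(
    "Suggest treatment options",
    PatientContext(45, "female", "asthma",
                   ["no prior hospitalizations", "non-smoker"]),
)
```

Here `example_prompt` reproduces the asthma prompt quoted above, and a missing field shows up as an error at construction time rather than as a generic answer later.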

Consistency in language further improves outcomes. Using the same terminology across multiple prompts helps the AI recognize patterns. For instance, always using “hypertension” instead of switching between “high blood pressure” and “HTN” reduces variability in the results.
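
Terminology can be made consistent automatically before a prompt is sent. The following sketch assumes a small hand-written synonym map; a real deployment would more likely draw on a maintained clinical vocabulary.

```python
import re

# Illustrative map from variants to the preferred term (an assumption,
# not a standard vocabulary).
PREFERRED_TERMS = {
    "high blood pressure": "hypertension",
    "HTN": "hypertension",
}


def normalize_terms(prompt: str) -> str:
    """Replace known variants with the preferred term, ignoring case."""
    for variant, preferred in PREFERRED_TERMS.items():
        # Word boundaries keep the match from firing inside longer words.
        pattern = r"\b" + re.escape(variant) + r"\b"
        prompt = re.sub(pattern, preferred, prompt, flags=re.IGNORECASE)
    return prompt
```

The replacement is always lowercase, so a variant at the start of a sentence would need its capital letter restored afterwards.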

Developers and clinicians now integrate specialized platforms that extend these principles. The Graphlogic Generative AI Platform includes retrieval-augmented generation, which allows AI to consult verified medical data instead of relying solely on pre-trained models. This reduces hallucinations in clinical queries, a common risk when working with generic large language models. The result is safer, more reliable text generation that aligns with real-world medical standards.
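
The general idea behind retrieval-augmented generation can be shown with a toy example. This sketch does not reflect how the Graphlogic platform or any other product is built: it ranks passages by simple word overlap, where production systems typically use embedding-based search, and all function names are hypothetical.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, passages: List[str], k: int = 2) -> List[str]:
    """Return the k passages sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(passages,
                    key=lambda p: len(query_words & tokenize(p)),
                    reverse=True)
    return ranked[:k]


def build_grounded_prompt(query: str, passages: List[str], k: int = 2) -> str:
    """Put the retrieved passages in front of the question."""
    sources = "\n".join(f"- {p}" for p in retrieve(query, passages, k))
    return (
        "Answer using only the sources below. "
        "If they do not cover the question, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )
```

The instruction to answer only from the supplied sources is what ties the output to verified material instead of the model's pre-trained recall.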

Another best practice is to frame prompts with outcome expectations. For instance, “write a 200-word summary with references to clinical guidelines” sets both length and quality criteria. This ensures that AI does not produce overly broad or incomplete answers. Adding output format instructions — bullet points, structured paragraphs, or tables — further increases usability.
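
A stated length target is only useful if the reply is checked against it. The following is a small sketch of such a check; the 10% tolerance is an arbitrary assumption, and whitespace-separated tokens are used as a rough word count.

```python
def meets_length(reply: str, target_words: int, tolerance: float = 0.1) -> bool:
    """Return True when the reply is within the tolerance of the word target."""
    count = len(reply.split())
    return abs(count - target_words) <= target_words * tolerance
```

For a 200-word target this accepts replies of 180 to 220 words, and anything outside that range can trigger a follow-up prompt asking for a shorter or fuller version.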

In practice, effective medical prompting combines linguistic precision with technical awareness. Clinicians who adopt these methods save time, avoid misleading outputs, and maintain higher trust in AI support systems.

Handling Abstract or Complex Concepts

Concepts like “pain” or “fear” are hard for AI to process. Instead of vague terms, describing observable signs helps. For example: “patient holds chest and breathes rapidly” gives the AI a clinical anchor. In image generation, “elderly patient with oxygen mask in hospital bed” is more effective than “fragile health.”

This approach parallels clinical training, where abstract complaints are translated into measurable criteria (CDC).

Commands, Tools, and Outputs

Advanced AI platforms offer commands that let users control quality, style, and scope. Midjourney allows adjustment of aspect ratios and detail levels. Medical use cases may include visualizing anatomy or treatment workflows. At the same time, text-to-speech tools already play a role in patient communication. The Graphlogic Text-to-Speech API is an example of a system that can support multilingual hospital settings.
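
As an illustration of such commands, Midjourney accepts an aspect-ratio parameter, `--ar`, appended to the prompt text. The helper below is a hypothetical convenience function, not part of any Midjourney tooling; it only builds the prompt string and validates the ratio format.

```python
import re


def with_aspect_ratio(description: str, ratio: str = "16:9") -> str:
    """Append an `--ar` aspect-ratio parameter to an image prompt."""
    if not re.fullmatch(r"\d+:\d+", ratio):
        raise ValueError(f"ratio must look like '16:9', got {ratio!r}")
    return f"{description.strip()} --ar {ratio}"
```

For example, `with_aspect_ratio("elderly patient with oxygen mask in hospital bed", "4:3")` yields a prompt ending in `--ar 4:3`.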

Common Errors and How to Avoid Them

When working with AI in healthcare, accuracy and clarity are essential. However, users often encounter predictable mistakes that reduce the quality of outputs and slow down clinical or research tasks. Below are the most frequent pitfalls, expanded with explanations and strategies to avoid them.

1. Overloading the AI with Complex, Multi-Layered Prompts

Many users try to ask everything at once — combining multiple questions, requesting different formats, and embedding contradictory requirements in a single prompt. This leads to confusion, fragmented answers, or overly generic text.

How to avoid:

- Break large tasks into smaller, sequential prompts.

- Use step-by-step instructions (“First summarize, then analyze, then suggest references”).

- Prioritize what is essential — clarity beats complexity.

2. Using Vague, Abstract, or Negative Language

Prompts like “Make this more professional” or “Don’t use technical words” leave too much room for interpretation. AI tools struggle with open-ended vagueness and may default to overly general responses. Similarly, negative phrasing (“Do not write long sentences”) is less effective than positive phrasing (“Write sentences under 12 words”).

How to avoid:

- Replace vague words with measurable instructions (e.g., “Limit to 150 words”).

- Favor positive framing of instructions.

- Provide a target audience or context (“Explain for a 12-year-old patient” vs. “Make it simple”).
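
The checks above can be partly automated with a small prompt linter. The sketch below is a crude heuristic built on illustrative word lists chosen for this example; it will produce false positives (for instance, it flags “no prior hospitalizations”) and is meant as a reminder, not a gate.

```python
import re
from typing import List

# Illustrative patterns only; both word lists are assumptions.
NEGATION = re.compile(r"\b(?:not|no|never|avoid|don['’]t)\b", re.IGNORECASE)
VAGUE = re.compile(r"\b(?:simple|professional|better|short|long)\b", re.IGNORECASE)
HAS_NUMBER = re.compile(r"\d")


def lint_prompt(prompt: str) -> List[str]:
    """Return warnings for negative phrasing and unmeasured vague wording."""
    warnings = []
    if NEGATION.search(prompt):
        warnings.append("negative phrasing: state what you want instead")
    # A vague word is tolerated when the prompt also gives a number.
    if VAGUE.search(prompt) and not HAS_NUMBER.search(prompt):
        warnings.append("vague wording: add a measurable limit or an audience")
    return warnings
```

Run on the examples from this section, “Do not write long sentences” triggers both warnings, while “Write sentences under 12 words” passes cleanly.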

3. Ignoring Language Bias in Training Data

AI systems may replicate biases from training data — for example, gendered assumptions in medical roles (“nurse = female, doctor = male”) or cultural gaps in health advice. If unchecked, this can mislead patients or undermine trust.

How to avoid:

- Be explicit about neutrality (“Use gender-neutral terms”).

- Request cultural inclusivity (“Provide dietary examples suitable for vegetarian patients”).

- Double-check sensitive outputs, especially in patient-facing materials.

4. Failing to Apply Available Tools or Commands

Many AI platforms support advanced features: structured outputs (tables, bullet lists), reference insertion, or external tool integrations (databases, calculators, image generators). Ignoring these reduces efficiency and precision.

How to avoid:

- Ask for structured outputs (“Provide a table with risk factors and prevalence”).

- Use follow-up prompts to refine results (“Add references in Vancouver style”).

- Explore multimodal commands (text + image, data + summary).
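
Requesting a table also makes the reply easier to process downstream. The following sketch parses a Markdown pipe table into rows; it assumes the model returned a header line, a separator line, and data rows with no pipe characters inside cells.

```python
from typing import Dict, List


def split_row(line: str) -> List[str]:
    """Split one pipe-delimited table line into trimmed cell values."""
    return [cell.strip() for cell in line.strip().strip("|").split("|")]


def parse_markdown_table(reply: str) -> List[Dict[str, str]]:
    """Turn a Markdown pipe table in a model reply into a list of row dicts."""
    lines = [ln.strip() for ln in reply.splitlines() if ln.strip().startswith("|")]
    if len(lines) < 2:
        return []  # no header plus separator, so nothing to parse
    header = split_row(lines[0])
    rows = []
    for line in lines[2:]:  # lines[1] is assumed to be the separator row
        cells = split_row(line)
        if len(cells) == len(header):  # skip malformed rows
            rows.append(dict(zip(header, cells)))
    return rows
```

An empty result signals that the model ignored the format instruction, which is a cue to repeat the request with the column names spelled out.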

Reminder: Each of these errors reduces accuracy. Research in The BMJ highlights that precision and trust in AI adoption are directly linked — unclear prompts lead to weaker outputs and lower user confidence.

Practical Prompt Examples for Healthcare

Clear and structured prompts not only improve accuracy but also save valuable time in clinical, research, and educational tasks. Below are expanded examples that demonstrate how different communication strategies yield effective AI responses.

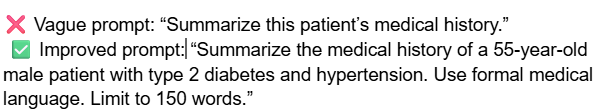

Example 1: Clinical Summary

Why it works: Word count ensures brevity, medical language ensures precision, and context ensures relevance.

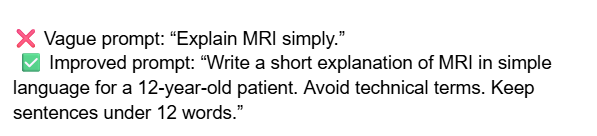

Example 2: Patient Education

Why it works: Clear audience targeting (child), readability limits, and style guidance reduce misunderstanding.

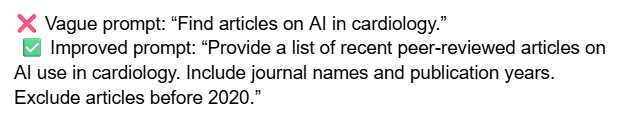

Example 3: Research Assistance

Why it works: Defines scope (peer-reviewed), timeframe (post-2020), and format (list with sources).

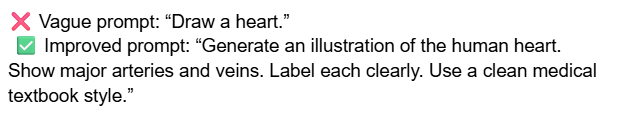

Example 4: Visual Prompt for Training

Why it works: Explicit instructions guide AI visualization toward clinically useful material.

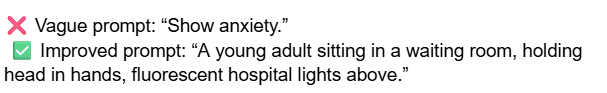

Example 5: Abstract to Concrete

Why it works: Translates abstract emotion into a specific, visual, context-driven scene.

Trends and Forecasts

AI in healthcare communication is evolving rapidly. What seemed experimental only a few years ago is now entering routine practice. Several key forces are driving this change — from clinical integration to regulatory oversight. Below is an expanded look at the three dominant trends, followed by longer-term forecasts.

1. Integration with Clinical Workflows

Hospitals around the world are increasingly testing AI-powered tools to support everyday clinical tasks. These include:

- Transcription and Documentation. Voice-to-text systems reduce the burden of manual note-taking. Clinicians in the U.S. and Europe already pilot systems that convert consultations into structured EHR entries. By 2027, most tertiary hospitals are expected to adopt automated transcription as a standard.

- Patient Education. Chatbots and interactive platforms provide tailored explanations of diagnoses, medications, and procedures. Instead of generic leaflets, patients receive conversational answers in simple language, adjusted to literacy level and age.

- Clinical Triage. AI systems assist in sorting incoming patient requests (e.g., phone lines, online portals), suggesting urgency levels and directing to appropriate specialists.

Forecast: Within the next 3 years, speech-to-text tools will likely become standard in outpatient settings. By the end of the decade, integrated AI “co-pilots” in EHR systems will proactively suggest billing codes, highlight missing information, and surface relevant guidelines. Physicians will not need to replace their routines but will increasingly delegate administrative burdens to AI.

2. Bias Reduction and Multilingual Support

One of the biggest challenges in healthcare AI is bias: tools trained primarily on English, Western-centric data risk producing errors for other populations. This limits reliability and fairness. Current trends show strong movement toward more inclusive datasets.

- Diverse Training Data. Leading research labs invest in multilingual and multi-ethnic data sources, aiming to reduce disparities in diagnostic recommendations and health communication.

- Equity in Global Health. Improved language coverage is critical for Asia, Africa, and Latin America, where the majority of the world’s population resides but resources are scarce. For example, AI that explains diabetes management in Hindi, Swahili, or Portuguese can drastically improve patient understanding.

- Cultural Context Awareness. Beyond translation, next-generation systems will adapt health advice to cultural practices (e.g., dietary habits, traditional medicine).

Forecast: By 2028, multilingual AI interfaces are expected to handle the majority of global patient queries with near-native fluency. This will bridge gaps in telemedicine, clinical research, and health education. Bias reduction will also be a key factor in regulatory approvals, with non-English performance metrics becoming mandatory in some regions.

3. Regulatory Pressure and Validation

As adoption accelerates, regulators step in to ensure safety and consistency. Agencies such as the FDA (U.S.), EMA (Europe), and national ministries of health are actively drafting frameworks for medical AI.

- Prompt and Output Validation. Just as drugs and devices undergo trials, AI prompts and responses may soon require standardized testing to prove accuracy and safety.

- Transparency and Explainability. Systems will likely need to disclose when outputs are AI-generated and provide traceability for references used.

- Quality Audits. Hospitals deploying AI for patient interaction may face regular audits to confirm compliance with data protection, safety, and equity standards.

Forecast: Between 2025 and 2030, regulatory compliance will become a central factor in AI adoption. Expect requirements for clinical-grade AI that passes external validation before use in high-risk environments (e.g., oncology, pediatrics). Vendors unable to meet these standards will face exclusion from healthcare markets.

Looking Ahead

The integration of AI into healthcare will not happen all at once but in progressive stages. Hospitals, universities, and regulators are shaping the pace of adoption. Below is a timeline that highlights the most likely milestones — from near-term implementation to long-term transformation of clinical practice.

| Year | Forecast |
| --- | --- |
| By 2027 | Most large hospitals will integrate AI into documentation, patient communication, and staff training. Prompt templates will be embedded into electronic health record (EHR) systems. |
| By 2030 | Prompt engineering will become a recognized skill for healthcare professionals, comparable to medical coding today. Medical schools may introduce modules on “clinical communication with AI.” |
| Beyond 2030 | Hybrid systems combining large language models with validated clinical databases (guidelines, drug formularies, genomic data) will dominate. They will act as assistants, surfacing evidence and reducing clerical burden, while leaving final judgment to clinicians. |

The overall trajectory points to AI as a trusted assistant, not a decision-maker. Its strength lies in scaling communication, reducing administrative workload, and improving patient understanding — all while keeping the human clinician at the center of care.

FAQ

No. But English gives the highest accuracy because most models are trained in it. Using a translator is often the best compromise.

Partially. Some terms are recognized, but rare abbreviations confuse systems. Plain wording improves results.

Yes. Long prompts increase confusion. Short and precise prompts yield faster and clearer answers.

Not fully. AI can assist but should not replace professional judgment. Clinical guidelines remain the standard.

Yes. For visualization tasks, controlling detail and format helps create usable medical graphics.